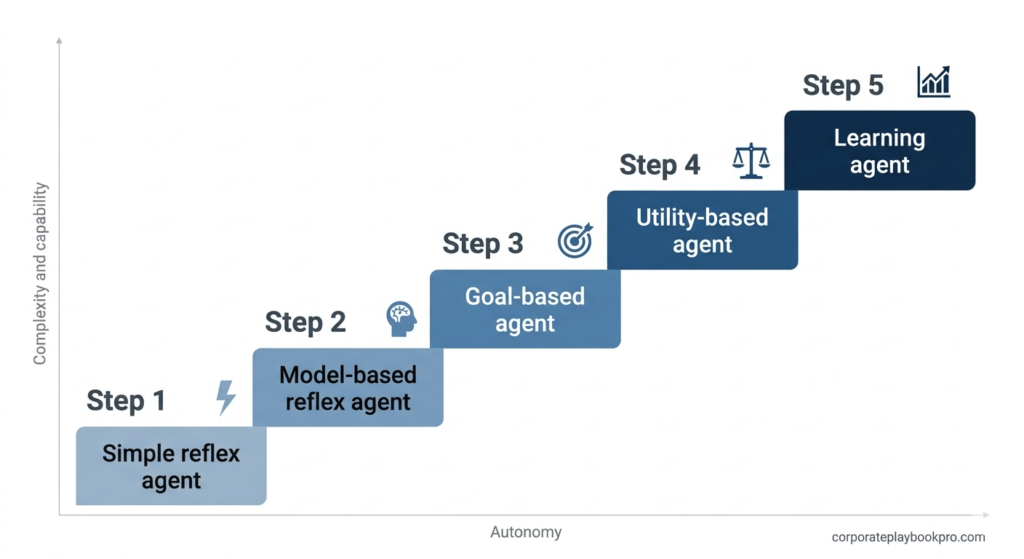

There are five classic types from foundational AI research: simple reflex, model-based reflex, goal-based, utility-based, and learning agents. In modern enterprise practice, these are extended with an autonomy-level framework — human-in-the-loop, human-on-the-loop, and fully autonomous — which adds three further meaningful distinctions.

Every software vendor selling you AI right now calls their product an “agent.” Your CRM has one. Your customer service platform has one. Your new HR tool has one. But here is something almost none of those vendors will tell you: there are fundamentally different types of AI agents, built on different architectures, with radically different capabilities, risk profiles, and failure modes — and deploying the wrong type for the wrong job is one of the most expensive mistakes a business can make in 2025.

This guide cuts through the noise. Whether you are a CTO evaluating infrastructure, a strategy lead building a business case, or an exec trying to get your bearings before your next vendor meeting, this is the reference you have been looking for.

The stakes are not small. The AI agents market was valued at $7.84 billion in 2025 and is projected to reach $52.62 billion by 2030, a compound annual growth rate of 46.3%. That growth is already inside your organisation, whether you have invited it or not. According to PwC, 79% of organisations have already adopted AI agents in some capacity, and 66% of those report measurable productivity gains. The organisations that are not seeing those gains are not using worse technology; they are using the right technology in the wrong way. Most of the time that comes down to one thing: deploying an agent type that does not match the risk profile of the task. (References: Fellow, ScienceDirect)
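As a quick sanity check, the quoted growth rate can be reproduced from the two market figures. This sketch assumes a five-year window (2025 to 2030) and the standard compound annual growth rate formula; the function name is ours, not the source's.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate as a fraction (0.10 means 10% a year)."""
    return (end_value / start_value) ** (1 / years) - 1


# Market size in $ billions, 2025 -> 2030, as quoted above.
rate = cagr(7.84, 52.62, years=5)
print(f"{rate:.1%}")  # prints 46.3%
```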

What is an AI agent — and what it is not

Before we talk about types, let us establish what an AI agent actually is, because the term is being applied so loosely right now that it has started to lose meaning.

An AI agent is a software system that can perceive its environment, make decisions, and take actions to achieve a defined goal — with some degree of autonomy. The key word is autonomy. A chatbot that responds to a prompt is not an agent. A workflow that fires a Zap when a form is submitted is not an agent. An agent is a system that can determine what to do next, not just what to say next.

How an AI agent differs from a chatbot or automation tool

A chatbot reacts. An agent acts. When you ask ChatGPT a question, it generates a response. When you deploy an AI agent on the same task, it receives the query, determines what information it needs, retrieves it from relevant sources, decides how to respond, takes any required downstream action — sending an email, updating a CRM record, escalating a ticket — and logs what it did. The output is not just a response. It is a completed workflow.

Traditional automation tools, like robotic process automation (RPA), follow fixed scripts. They are deterministic — the same input always produces the same output. AI agents are different because they reason. They can handle inputs they have never seen before, adapt to changing conditions, and choose between multiple possible paths to a goal.

The three things every AI agent has in common

Regardless of type, every AI agent is built around three core capabilities. It perceives — taking in information from its environment, whether that is a user message, a data feed, an email inbox, or a connected system. It decides — evaluating that information against its goals and determining the best course of action. And it acts — executing that decision, whether that means responding, writing, retrieving, calling an API, or triggering another system.
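To make the perceive, decide, act cycle concrete, here is a minimal Python sketch. The `Agent` class and the order-status wiring are invented for illustration; a production agent would put real integrations behind each of the three functions.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class Agent:
    """Hypothetical skeleton: one function per core capability."""
    perceive: Callable[[str], dict]   # raw input -> structured observation
    decide: Callable[[dict], str]     # observation -> chosen action name
    act: Callable[[str, dict], str]   # action name + observation -> result
    log: List[str] = field(default_factory=list)

    def step(self, raw_input: str) -> str:
        observation = self.perceive(raw_input)
        action = self.decide(observation)
        result = self.act(action, observation)
        self.log.append(f"{action}: {result}")  # record what was done
        return result


# Illustrative wiring with made-up behaviour.
agent = Agent(
    perceive=lambda text: {"text": text.lower()},
    decide=lambda obs: "lookup_order" if "order" in obs["text"] else "escalate",
    act=lambda action, obs: f"ran {action}",
)
```

Calling `agent.step("Where is my ORDER?")` runs all three stages once and appends the outcome to `agent.log`.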

What changes across agent types is how sophisticated each of those three capabilities is, how much memory the agent has, and how much human oversight is built into the loop.

Why the type of agent you choose changes everything

Gartner predicts that 40% of enterprise applications will be integrated with task-specific AI agents by the end of 2026, up from less than 5% in 2025. (Reference: Gartner) That adoption curve is moving faster than most organisations’ understanding of what they are actually deploying.

The consequence is predictable. Gartner projects that over 40% of agentic AI projects will be cancelled by the end of 2027 because of escalating costs, unclear business value, or inadequate risk controls. (Reference: Arcade Blog) A significant share of those failures will not be failures of technology. They will be failures of fit: the wrong agent architecture deployed in the wrong context, at the wrong autonomy level, without the right governance structure.

Understanding agent types is not an academic exercise. It is the foundation of every intelligent deployment decision you will make.

There are two frameworks you need to know. The first is the classic five-type taxonomy, which describes what an agent is and how it makes decisions. The second is the modern autonomy-level model, which describes how independently an agent operates and what role humans play. Together, they give you a complete picture of the landscape.

The 5 classic types of AI agents — and what they mean in practice

These five types come from foundational AI research, specifically Russell and Norvig’s landmark textbook Artificial Intelligence: A Modern Approach, which remains one of the most cited frameworks in the field. Most modern AI agents, including the LLM-powered systems your vendors are selling today, can be described in terms of one or more of these architectures, even if no one tells you so.

1. Simple reflex agents

What it is. A simple reflex agent acts on what it can see right now. It takes in the current input, applies a predefined rule, and produces an output. There is no memory of past interactions, no planning, no learning. It is pure if-then logic.

Business example. A customer support bot that categorises incoming tickets by keyword — “refund” goes to billing, “broken” goes to technical support — and routes them accordingly. The moment the ticket is categorised, the agent’s job is done. It does not remember the previous ticket. It does not get better at routing over time.
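The routing example above can be sketched in a few lines. The rule table, the queue names, and the `general` fallback are assumptions made for illustration.

```python
# Hypothetical condition-action rules: keyword -> queue.
RULES = {
    "refund": "billing",
    "broken": "technical_support",
}
DEFAULT_QUEUE = "general"  # assumed fallback for anything no rule covers


def route_ticket(text: str) -> str:
    """Return the queue for the first rule whose keyword appears in the ticket."""
    lowered = text.lower()
    for keyword, queue in RULES.items():
        if keyword in lowered:
            return queue
    return DEFAULT_QUEUE
```

Note that a ticket saying "money back please" lands in the fallback queue: the agent only knows the words it was given.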

Where it works. High-volume, low-variability tasks where the rules are clear and exceptions are rare. Email triage. Basic data classification. Automated alert systems.

Honest limitation. The moment the environment changes (a new product category, an unusual customer phrase, an edge case the rules do not cover), a simple reflex agent fails or routes incorrectly. It has no mechanism for handling what it was not programmed for, and no memory to put the current input in context. The next type was built to solve that memory problem.

2. Model-based reflex agents

What it is. A model-based reflex agent does everything a simple reflex agent does, but with one critical addition: it maintains an internal model of the world. It tracks context over time, which means it can handle situations where the current input alone is not enough to make a good decision.

Business example. A fraud detection system that does not just look at a single transaction in isolation but considers the pattern of transactions across time — location, amount, frequency, merchant category — and flags anomalies based on what “normal” looks like for that account. It is still rule-based at its core, but the rules operate on a richer, contextualised picture of reality.
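A minimal sketch of the idea, assuming the only state kept is each account's past transaction amounts. The class name, the 3x multiplier, and the minimum history length are illustrative choices, not recommended fraud thresholds.

```python
from collections import defaultdict
from statistics import mean


class FraudMonitor:
    """Hypothetical model-based reflex agent: fixed rule, per-account state."""

    def __init__(self, multiplier: float = 3.0, min_history: int = 3):
        self.history = defaultdict(list)  # internal model: account -> past amounts
        self.multiplier = multiplier      # assumed threshold, for illustration only
        self.min_history = min_history

    def check(self, account: str, amount: float) -> bool:
        """Return True if the transaction should be flagged, then update state."""
        past = self.history[account]
        flagged = (
            len(past) >= self.min_history
            and amount > self.multiplier * mean(past)
        )
        past.append(amount)
        return flagged


monitor = FraudMonitor()
results = [monitor.check("acct-1", amt) for amt in (20, 25, 30, 500)]
```

The rule itself never changes; what changes is the picture of "normal" that the rule is applied to.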

Where it works. Monitoring and anomaly detection. Customer behaviour analysis. Inventory management systems that track stock levels across multiple variables over time.

Honest limitation. The internal model still needs to be defined and maintained by humans. If the real world changes in ways the model does not account for — a new fraud pattern, a seasonal behaviour shift — the agent’s decisions degrade without a model update. But tracking context is still not the same as pursuing a goal — and that is where the third type comes in.

3. Goal-based agents

What it is. A goal-based agent does not just react to the current situation — it evaluates possible actions against a defined goal and selects the action most likely to achieve it. This requires planning. The agent can look ahead, consider sequences of actions, and choose a path.

Business example. A sales outreach agent that has a goal of booking discovery calls. It evaluates a prospect list, considers what time of day, what channel, and what message has historically driven responses for that segment, sequences a multi-touch outreach, and adjusts its approach based on what is working. It is not following a fixed script — it is pursuing an objective.
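Planning toward a goal can be illustrated with a small search. This sketch assumes the outreach process can be drawn as a graph of states and actions; the states, action names, and the choice of breadth-first search are all ours.

```python
from collections import deque
from typing import Dict, List, Optional

# Hypothetical state graph: state -> {action: next_state}.
TRANSITIONS: Dict[str, Dict[str, str]] = {
    "cold_lead": {"send_email": "emailed", "connect_on_linkedin": "connected"},
    "emailed": {"follow_up_call": "in_conversation"},
    "connected": {"send_message": "in_conversation"},
    "in_conversation": {"propose_times": "call_booked"},
}


def plan(start: str, goal: str) -> Optional[List[str]]:
    """Breadth-first search for a shortest action sequence from start to goal."""
    queue = deque([(start, [])])
    seen = {start}
    while queue:
        state, actions = queue.popleft()
        if state == goal:
            return actions
        for action, nxt in TRANSITIONS.get(state, {}).items():
            if nxt not in seen:
                seen.add(nxt)
                queue.append((nxt, actions + [action]))
    return None  # goal unreachable from this state
```

Here `plan("cold_lead", "call_booked")` returns a three-step sequence. The agent is given the destination and works out the route itself.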

Where it works. Any task that involves sequencing decisions toward a defined outcome. Sales automation. Project scheduling. Supply chain optimisation.

Honest limitation. Goal-based agents can optimise aggressively for the stated goal in ways that create unintended consequences. If the goal is “book calls” with no quality constraint, the agent may pursue low-quality prospects at high volume. The goal definition is everything — and getting it wrong produces results that are technically successful but commercially counterproductive. When a single goal is not enough and trade-offs must be made, the fourth type takes over.

4. Utility-based agents

What it is. A utility-based agent goes a step further than goal-based agents by not just pursuing a single goal but maximising a utility function — a measure of how desirable different outcomes are. This allows it to make trade-offs. It can weigh competing objectives, handle uncertainty, and choose the action that produces the best expected outcome across multiple dimensions.

Business example. A dynamic pricing engine that simultaneously optimises for revenue, inventory clearance, competitive positioning, and customer lifetime value. It does not just set the highest possible price — it balances multiple objectives in real time based on current conditions.
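One simple way to express such a trade-off is a weighted sum. The weights, objective names, and predicted scores below are made up; a real pricing engine would estimate them from data.

```python
# Hypothetical weights: how much each objective matters.
WEIGHTS = {"revenue": 0.5, "clearance": 0.3, "competitiveness": 0.2}


def utility(scores: dict, weights: dict = WEIGHTS) -> float:
    """Weighted sum of per-objective scores, each assumed to lie in [0, 1]."""
    return sum(weights[name] * scores[name] for name in weights)


def choose_price(candidates: dict, weights: dict = WEIGHTS) -> float:
    """Pick the candidate price whose predicted outcome has the highest utility."""
    return max(candidates, key=lambda price: utility(candidates[price], weights))


# Made-up predicted outcomes for three candidate prices.
candidates = {
    19.99: {"revenue": 0.6, "clearance": 0.9, "competitiveness": 0.9},
    24.99: {"revenue": 0.8, "clearance": 0.6, "competitiveness": 0.6},
    29.99: {"revenue": 0.9, "clearance": 0.2, "competitiveness": 0.3},
}
```

With these weights the lowest price wins. Set the revenue weight to 1.0 and the others to zero, and the same code picks the highest price. The function is doing what it was told in both cases, which is why the weights deserve more scrutiny than the code.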

Where it works. Complex optimisation tasks where multiple objectives must be balanced simultaneously. Logistics and routing. Pricing. Resource allocation across competing demands.

Honest limitation. Utility functions are hard to design correctly. What you tell the agent to optimise for and what you actually want are rarely the same thing, and the gap between them only becomes visible after the agent has been running long enough to exploit it. This is the agent type most prone to “specification gaming” — technically doing what it was told while producing outcomes you did not intend. The one thing a utility-based agent still cannot do is get better at its job over time — which is what separates it from the fifth and most sophisticated type.

5. Learning agents

What it is. A learning agent improves over time. In Russell and Norvig’s design it has four parts: a performance element that selects actions, a critic that evaluates how well the agent is doing, a learning element that uses that feedback to decide what should change, and a problem generator that proposes new strategies to explore. Unlike the previous types, it does not just operate within a fixed model; it updates the model based on experience.

Business example. A customer churn prediction system that continuously refines its model as new customer behaviour data comes in, improving its predictions as it accumulates evidence. Or a content recommendation engine that learns from engagement signals — clicks, time spent, scroll depth — and improves its recommendations with every interaction.
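The recommendation example can be sketched as an epsilon-greedy loop, a common and deliberately simple technique that we are swapping in here for illustration. The class and its parameters are hypothetical. Random exploration plays the role of the problem generator, and `learn` plays the role of the learning element.

```python
import random


class RecommenderAgent:
    """Hypothetical learning agent: epsilon-greedy choice over content items."""

    def __init__(self, items, epsilon: float = 0.1, seed: int = 0):
        self.shows = {item: 0 for item in items}
        self.clicks = {item: 0 for item in items}
        self.epsilon = epsilon            # share of recommendations spent exploring
        self.rng = random.Random(seed)

    def click_rate(self, item) -> float:
        # Observed clicks per show; 0.0 until the item has been shown.
        return self.clicks[item] / self.shows[item] if self.shows[item] else 0.0

    def recommend(self):
        # Explore: occasionally try a random item.
        if self.rng.random() < self.epsilon:
            return self.rng.choice(list(self.shows))
        # Exploit: otherwise pick the item with the best observed click rate.
        return max(self.shows, key=self.click_rate)

    def learn(self, item, clicked: bool) -> None:
        # Update the estimates from the engagement signal.
        self.shows[item] += 1
        self.clicks[item] += int(clicked)
```

Every call to `learn` shifts future recommendations, so skewed feedback skews the agent's behaviour.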

Where it works. Anywhere that performance improves with data. Recommendation systems. Predictive analytics. Personalisation engines. Adaptive customer service systems.

Honest limitation. Learning agents require clean, sufficient, and representative data to learn from. A learning agent trained on biased or incomplete data will optimise in the wrong direction, and it will do so confidently. The quality of what the agent learns depends entirely on the quality of what it is taught. It also helps to be realistic about maturity: most organisations are still navigating the transition from experimentation to scaled deployment, and while they may be capturing value in some parts of the organisation, they are not yet realising enterprise-wide financial impact. (Reference: McKinsey & Company)

These five types form the architectural foundation. What determines how much trust you give them in production is an entirely different framework — and that is what we cover next.

The Modern Autonomy Framework — what AI vendors actually mean in 2026

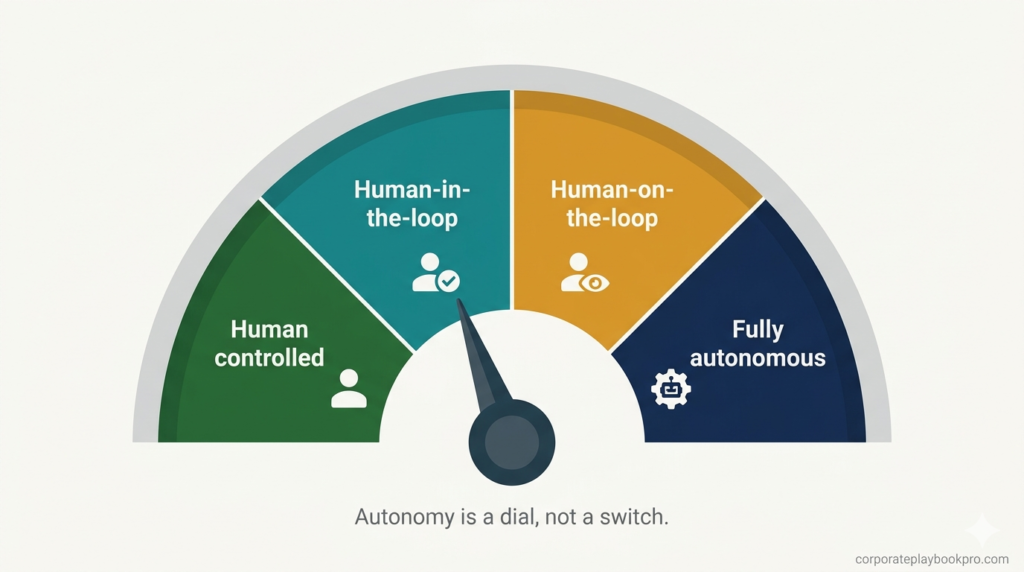

The five classic types describe what an agent is. The autonomy framework describes how independently it operates. In 2025, this is the dimension that matters most to business leaders, because it directly determines your risk exposure, your governance requirements, and your implementation path.

Twenty-three percent of organisations report they are scaling an agentic AI system somewhere in their enterprise, with an additional 39% in experimental phases. (Reference: McKinsey & Company) But across all of those deployments, the single most important variable is not which type of agent is being used. It is how much autonomy it has been granted, and whether that autonomy level is appropriate for the task.

The autonomy spectrum

Think of agent autonomy as a dial with four positions, not an on-off switch.

At one end is full human control — the AI is a tool. A human makes every decision; the AI only assists. At the other end is full autonomy — the AI acts end-to-end with no human in the loop for individual decisions. Between those extremes are two critical operating modes that most enterprise deployments should live in.

Human-in-the-Loop (HITL)

The agent executes tasks, but a human must review and approve before consequential actions are taken. The agent does the work; a human holds the gate. This is the right starting position for any high-stakes deployment — finance, legal, HR, customer-facing communications — where a wrong decision has real consequences that are difficult to reverse.

Business example. An accounts payable agent that processes invoices, flags discrepancies, and prepares payment instructions — but requires a human approval before any payment is executed. The agent does 90% of the work. A human carries the accountability for the 10% that matters most.
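In code, the gate is a function the agent cannot bypass. This is a minimal sketch with invented names. In practice `approve` would be a review screen or ticket queue rather than a lambda.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class PaymentInstruction:
    invoice_id: str
    amount: float


def run_with_approval(
    instructions: List[PaymentInstruction],
    approve: Callable[[PaymentInstruction], bool],  # the human gate
    execute: Callable[[PaymentInstruction], None],  # the consequential action
) -> List[str]:
    """Execute only the instructions a human approves; return an audit log."""
    audit_log = []
    for item in instructions:
        if approve(item):
            execute(item)
            audit_log.append(f"{item.invoice_id}: approved and paid")
        else:
            audit_log.append(f"{item.invoice_id}: rejected, not paid")
    return audit_log


# Illustrative run: the approve lambda stands in for a human reviewer.
paid = []
log = run_with_approval(
    [PaymentInstruction("INV-1", 120.0), PaymentInstruction("INV-2", 98000.0)],
    approve=lambda p: p.amount < 1000,
    execute=lambda p: paid.append(p.invoice_id),
)
```

The design point is that `execute` is only reachable through `approve`, and every decision leaves a line in the log either way.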

Human-on-the-Loop (HOTL)

The agent acts autonomously, but a human monitors and can intervene. No approval is required for routine actions, but the system provides visibility and the human can override. This is appropriate for tasks that are high-volume, time-sensitive, and where the cost of occasional errors is manageable.

Business example. A customer service agent that resolves tier-one support queries autonomously — password resets, order status, basic troubleshooting — while a supervisor dashboard shows all interactions in real time and flags anything with a low-confidence score or a negative sentiment signal for human review.
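The supervisor dashboard's flagging logic might look something like this. The thresholds, the sentiment scale, and the field names are assumptions. The key difference from a human-in-the-loop gate is that nothing here blocks the agent's action.

```python
from dataclasses import dataclass
from typing import List

CONFIDENCE_FLOOR = 0.8   # assumed threshold; tune per deployment
SENTIMENT_FLOOR = -0.3   # assumed scale: -1 (negative) to +1 (positive)


@dataclass
class Interaction:
    ticket_id: str
    confidence: float  # agent's own confidence in its resolution
    sentiment: float   # customer sentiment score for the conversation


def flag_for_review(interactions: List[Interaction]) -> List[str]:
    """Return IDs of already-handled interactions a supervisor should look at."""
    return [
        i.ticket_id
        for i in interactions
        if i.confidence < CONFIDENCE_FLOOR or i.sentiment < SENTIMENT_FLOOR
    ]


handled = [
    Interaction("T-1", confidence=0.95, sentiment=0.4),
    Interaction("T-2", confidence=0.55, sentiment=0.1),
    Interaction("T-3", confidence=0.90, sentiment=-0.7),
]
flagged = flag_for_review(handled)
```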

Fully autonomous agents

The agent operates end-to-end with no routine human checkpoints. Humans are involved in design, oversight, and periodic auditing, but not in individual decisions. By 2028, at least 15% of day-to-day work decisions are forecast to be made autonomously through agentic AI, up from 0% in 2024. (Reference: Datagrid)

Full autonomy is appropriate for low-stakes, high-volume, highly reversible tasks where the cost of human review exceeds the cost of occasional errors. It is not appropriate for decisions that carry legal, financial, reputational, or safety consequences until a substantial track record of reliable performance has been established.

How to deploy AI agents with minimal risk: the progressive autonomy path

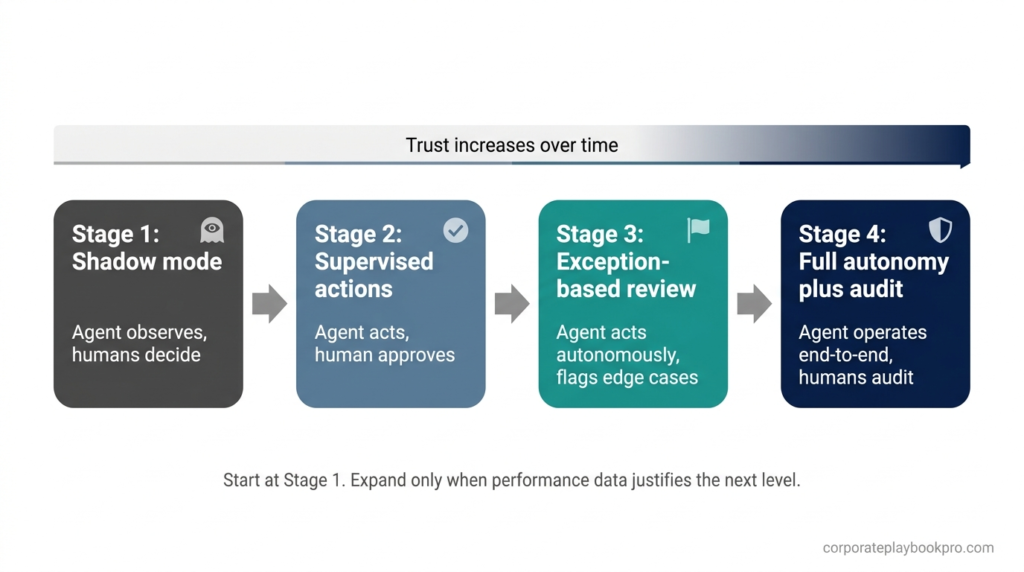

Deploying AI agents with minimal risk is one of the biggest challenges businesses face, and the answer is progressive autonomy. Progressive autonomy is not a type of agent. It is a deployment strategy, and it is the most important concept in this entire guide for any business that is serious about deploying agentic AI responsibly.

The principle is simple: start with maximum human oversight and reduce it incrementally as performance evidence accumulates. BCG’s experience shows that effective AI agents can accelerate business processes by 30% to 50% (Reference: BCG), but those gains are only realised by organisations that build the trust infrastructure first, not those that grant full autonomy on day one and hope for the best.

In practice, this means beginning in shadow mode — the agent operates in parallel with humans, generating recommendations without taking action — then moving to supervised action, then exception-based review, and finally full autonomy with audit trails. Each stage provides performance data that justifies the next level of trust.
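The four stages can be written down as a ladder with an explicit promotion rule. The rule used here (enough observed decisions, and an error rate below the human baseline) and the 500-decision minimum are assumptions for illustration. Your own criteria will depend on the task.

```python
STAGES = ["shadow", "supervised", "exception_review", "autonomous"]


def next_stage(
    current: str,
    agent_error_rate: float,
    human_error_rate: float,
    decisions_observed: int,
    min_decisions: int = 500,  # assumed evidence threshold
) -> str:
    """Move up one stage only when the evidence supports it; otherwise stay put."""
    idx = STAGES.index(current)
    enough_evidence = decisions_observed >= min_decisions
    beats_baseline = agent_error_rate < human_error_rate
    if enough_evidence and beats_baseline and idx < len(STAGES) - 1:
        return STAGES[idx + 1]
    return current
```

The function only ever moves one step at a time, which mirrors the point of the approach: each stage has to earn the next.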

All AI agent types compared — side by side

| Agent type | Memory | Planning | Learns | Autonomy level | Best for | Risk profile |
|---|---|---|---|---|---|---|
| Simple reflex | None | None | No | Low | High-volume rule-based tasks | Low: predictable failures |
| Model-based reflex | Short-term | None | No | Low-medium | Monitoring, anomaly detection | Low-medium |
| Goal-based | Contextual | Yes | No | Medium | Multi-step workflows, outreach | Medium: goal specification risk |
| Utility-based | Contextual | Yes | No | Medium-high | Optimisation, pricing, routing | Medium-high: specification gaming risk |
| Learning | Long-term | Yes | Yes | Medium-high | Personalisation, prediction | High: data quality dependency |
| HITL deployment | Any | Any | Any | Medium | High-stakes, regulated tasks | Managed: human gate retained |
| HOTL deployment | Any | Any | Any | High | Scalable operations, customer service | Medium: monitoring dependent |
| Fully autonomous | Any | Any | Any | Maximum | Low-stakes, high-volume | High: audit trail essential |

Use this table as a reference before any vendor conversation. When a vendor tells you their product uses “AI agents,” this is the framework that lets you ask the right follow-up questions.

Which type of AI agent is right for your business?

The honest answer is that most organisations need more than one type — and the right type depends on the task, not the vendor. Here is how to think through the decision by business priority.

If your priority is speed and volume — start with simple reflex or model-based agents

If you are processing thousands of similar inputs every day — support tickets, invoice approvals, data entry, content categorisation — and the rules are well understood, a simple or model-based reflex agent delivers the fastest ROI with the lowest implementation risk. These are not glamorous. They do not learn. But they are reliable, auditable, and cheap to run at scale.

If your priority is accuracy and compliance — build around HITL goal-based agents

Regulated industries (finance, healthcare, legal, insurance) should build their initial agentic deployments around goal-based agents with human-in-the-loop governance. The agent handles the analysis, preparation, and sequencing. A licensed human retains the decision authority. According to Deloitte’s 2025 survey, 85% of organisations increased their AI investment in the past 12 months (Reference: Deloitte), but among regulated industries, the ones generating measurable returns are those that matched their autonomy level to their compliance requirements from the start.

If your priority is learning and adaptation — invest in the data foundation first

Learning agents are the most powerful and the most demanding. BCG’s research shows that AI agents account for about 17% of company-wide AI value in 2025, with that share expected to almost double to 29% by 2028 (Reference: BCG) — and learning agents will drive a disproportionate share of that growth. But they require clean, well-structured, representative data. Before deploying a learning agent in any core business function, invest three to six months in data quality, labelling, and infrastructure. The agent is only as good as what it learns from.

If you are just starting out — start here

Begin with one contained use case that is high volume, low stakes, and clearly measurable. Deploy a goal-based agent in HITL mode. Run it in shadow mode for four to six weeks. Measure its accuracy against human decisions. Expand autonomy only when the error rate is demonstrably lower than the human baseline. BCG finds that leading companies focus on depth over breadth, prioritising an average of 3.5 use cases compared with 6.1 for other companies, and they anticipate generating 2.1 times greater ROI. (Reference: BCG) Depth beats breadth at every stage of the agentic AI journey.

Three mistakes businesses make when deploying AI agents

These are not hypothetical. They are patterns visible across hundreds of enterprise AI deployments in 2025.

Mistake 1: Choosing by capability instead of risk profile.

A vendor demonstrates an impressive autonomous agent that can execute end-to-end workflows. The demo is compelling. The business deploys it at scale before establishing what happens when it gets something wrong — and it will get something wrong. The question is never “can this agent do the task?” The question is “what is the cost of a failure, and does our current governance infrastructure handle it?”

Mistake 2: Skipping progressive autonomy.

Fifty-one percent of organisations using AI have experienced at least one negative consequence. (Reference: Arcade Blog) Many of those consequences came from granting autonomy before establishing a track record. Shadow mode feels slow. Supervised action feels inefficient. But the organisations that have taken the progressive autonomy path methodically are the ones that have been able to scale without the high-profile failures that set programmes back by months or years.

Mistake 3: Treating agent type as a vendor decision instead of a business architecture decision.

The most important choices in an agentic AI deployment — which type, what autonomy level, what human oversight model, what audit trail — are not made by the vendor. They are made by you. Vendors provide the technology. You define the governance. Outsourcing that decision to a vendor is not a technology failure waiting to happen. It is a governance failure waiting to happen.

Conclusion

The AI agent landscape is not going to get simpler. According to Grand View Research, the global AI agents market is expected to reach $50.31 billion by 2030, growing at a CAGR of 45.8% from 2025. (Reference: Quytech) The vendors will multiply. The terminology will fragment further. The demos will get more impressive and more misleading simultaneously.

The organisations that come out ahead are not the ones that move fastest. They are the ones that started with the right framework. Knowing what type of agent you are actually deploying — its architecture, its autonomy level, its failure mode — is the first decision in a long chain of decisions. Get it wrong and every subsequent decision is built on a misunderstanding. Get it right and you have the foundation for a deployment that scales, that earns trust incrementally, and that your team can actually govern.

That is what this guide was built to give you. The next question, how to choose between these types when you are facing a real business decision with real constraints, is the subject of the next guide in this series: How to Select the Right AI Agent for Your Business: The Six-Angle Framework for Decision-Makers (in progress; subscribe for updates). It picks up exactly where this one ends.

Frequently asked questions: types of AI agents

What are the 5 types of AI agents?

The five classic types of AI agents are simple reflex agents, model-based reflex agents, goal-based agents, utility-based agents, and learning agents. This framework comes from foundational AI research by Russell and Norvig and remains the most widely used classification in both academic and enterprise contexts. Each type operates at a different level of intelligence and autonomy — choosing the right one depends on the complexity of your task, how often conditions change, and what a wrong decision would cost your business.

What is an AI agent in simple terms?

An AI agent is a software system that perceives its environment, makes decisions, and takes actions to achieve a defined goal, with some degree of independence. Unlike a search bar or a chatbot, it does not wait for step-by-step instructions. It receives an objective, determines what steps are needed, and executes them. Put another way, an AI agent is a program that can act autonomously to achieve a goal rather than just follow a list of commands; the key difference is its ability to reason and plan, not just respond. (Reference: Citrusbug Technolabs)

What is the difference between an AI agent and a chatbot?

A chatbot matches your question to a pre-written FAQ answer or a knowledge-base article. An AI agent understands the context behind your question, reasons across connected systems, and takes action to resolve the issue. In business terms: a chatbot answers. An agent acts. A chatbot tells a customer their order is delayed. An agent identifies the delay, contacts the supplier, updates the CRM record, and drafts the customer notification, without being asked to do each step. (Reference: DigitalOcean)

What is the difference between an AI agent and an AI assistant?

An AI assistant responds to prompts. An AI agent pursues goals. When you ask an assistant to draft an email, it drafts the email. When you deploy an agent on the same task, it identifies who needs to be emailed, determines the right message based on context, drafts it, schedules it, sends it, and logs the outcome — all without step-by-step human instruction.

How many types of AI agents are there?

There are five classic types from foundational AI research: simple reflex, model-based reflex, goal-based, utility-based, and learning agents. In modern enterprise practice, these are extended with an autonomy-level framework (human-in-the-loop, human-on-the-loop, and fully autonomous), which adds three further meaningful distinctions. The AI agents market is projected to grow from $5.1 billion in 2024 to $52.62 billion by 2030, and as the market grows, classification frameworks are expanding accordingly. Depending on the source, you will see counts ranging from five to eight recognised types. (Reference: Interface-eu)

Are large language models a type of AI agent?

No. A large language model is the reasoning engine inside an AI agent, not the agent itself. The LLM provides the agent with its conversational and reasoning capabilities. In addition to the LLM, an agent has access to tools and memory, which allow it to take actions and perform tasks. The models behind ChatGPT, Claude, and Gemini are LLMs. An agent is the full system that wraps an LLM with tools, memory, and an action loop. (Reference: Citrusbug Technolabs)
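Here is a rough sketch of that wrapping, with a stand-in function in place of a real model call. `stub_llm`, `LLMAgent`, and the single `lookup_order` tool are all hypothetical; no vendor's API is being shown.

```python
from typing import Callable, Dict, List


def stub_llm(prompt: str) -> str:
    """Stand-in for a model call: returns the name of a tool to use, or 'done'."""
    return "done" if "order_status=" in prompt else "lookup_order"


class LLMAgent:
    """Hypothetical wrapper: an LLM plus tools, memory, and an action loop."""

    def __init__(self, llm: Callable[[str], str], tools: Dict[str, Callable[[], str]]):
        self.llm = llm
        self.tools = tools
        self.memory: List[str] = []  # results of earlier tool calls

    def run(self, task: str, max_steps: int = 5) -> List[str]:
        for _ in range(max_steps):            # the action loop
            prompt = task + "\n" + "\n".join(self.memory)
            choice = self.llm(prompt)         # the LLM decides the next step
            if choice == "done":
                break
            self.memory.append(self.tools[choice]())  # a tool does the acting
        return self.memory


agent = LLMAgent(stub_llm, tools={"lookup_order": lambda: "order_status=shipped"})
result = agent.run("Where is my order?")
```

Take away the tools, the memory, and the loop, and what remains is the function that maps a prompt to text. That function is the LLM's part.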

What is agentic AI and how is it different from regular AI?

Regular AI, including most chatbots and language models, responds to prompts. Agentic AI pursues goals. Agentic AI is a new breed of AI systems that are semi- or fully autonomous and thus able to perceive, reason, and act on their own, integrating with other software systems to complete tasks independently or with minimal human supervision. The practical difference for business: regular AI saves time on individual tasks. Agentic AI redesigns entire workflows by removing the human from repetitive decision loops. (Reference: Red Hat)

What is the most commonly deployed AI agent type in business today?

Goal-based agents operating in human-in-the-loop mode are the most widely deployed type in enterprise settings. Research and data extraction is where AI agents are proving most useful, with 58% of companies using them to handle information-heavy tasks. IT service management, knowledge management, and customer service automation lead adoption, and all of them rely on goal-based architectures where the agent pursues a defined outcome while a human retains oversight of consequential decisions. (Reference: DigitalOcean)

What is a goal-based AI agent and when should a business use one?

A goal-based agent evaluates possible actions against a defined objective and selects the path most likely to achieve it. Unlike simple reflex agents that follow fixed rules, goal-based agents can plan sequences of actions and adapt when conditions change. Businesses should use them when the task involves multiple steps toward a clear outcome — sales outreach sequencing, inventory reorder planning, project task allocation, or any workflow where the end goal is fixed but the path to it varies by context.

What is the difference between a goal-based and a utility-based AI agent?

A goal-based agent has one objective and pursues it. A utility-based agent has multiple competing objectives and chooses the best overall outcome across all of them. A goal-based agent books the cheapest flight. A utility-based agent balances cost, travel time, layover duration, carbon footprint, and airline loyalty points, and makes the trade-off automatically. Use a goal-based agent when the task has a single clear outcome. Use a utility-based agent when multiple priorities must be weighed simultaneously in real time.

What is human-in-the-loop AI and why does it matter for enterprise deployments?

Human-in-the-loop AI means a human must review and approve the agent’s recommended action before it executes. The agent does the analysis, preparation, and sequencing; a human holds the gate on consequential decisions. Human review is especially important when decisions involve legal exposure, financial risk, compliance obligations, safety outcomes, or reputational damage. For enterprise deployments, HITL is the right starting position for any high-stakes workflow until a sufficient performance track record justifies expanding the agent’s autonomy. (Reference: DigitalOcean)

What is the difference between human-in-the-loop and human-on-the-loop AI?

Human-in-the-loop means no action executes without human approval — the human is a required checkpoint. Human-on-the-loop means the agent acts autonomously but a human monitors activity and can intervene. HITL is appropriate for high-stakes, low-reversibility decisions — financial approvals, legal communications, compliance-sensitive actions. HOTL suits high-volume, time-sensitive workflows where the cost of occasional errors is manageable and monitoring dashboards provide sufficient visibility. Most enterprise AI programmes move from HITL to HOTL as performance evidence accumulates.

How autonomous should an AI agent be in a business environment?

Autonomy should match the reversibility and consequence of the decision, not the capability of the agent. Low-stakes, high-volume, easily reversible tasks (email categorisation, data entry, report generation) can be fully autonomous. Medium-stakes tasks with occasional judgment calls belong in human-on-the-loop mode. High-stakes, low-reversibility decisions (payments, legal documents, compliance filings) require human-in-the-loop gates regardless of agent capability. As you move agency from humans to machines, there’s a real increase in the importance of governance and infrastructure to control and support agentic systems. (Reference: Red Hat)
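That rule of thumb is simple enough to write down. In this sketch the three stakes labels and the function name are our own. Agent capability is deliberately left out of the parameters, in line with the guidance above.

```python
def autonomy_mode(stakes: str, reversible: bool) -> str:
    """Map a task's stakes ('low', 'medium', 'high') and reversibility to an oversight mode."""
    if stakes not in {"low", "medium", "high"}:
        raise ValueError(f"unknown stakes level: {stakes!r}")
    if stakes == "high" or not reversible:
        return "human-in-the-loop"
    if stakes == "medium":
        return "human-on-the-loop"
    return "fully autonomous"
```

A low-stakes task that cannot be undone still returns `human-in-the-loop`, because irreversibility alone is treated as enough to require a gate. That is a conservative assumption on our part.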

What happens when an AI agent makes a mistake in a business workflow?

The consequence depends entirely on what governance was in place before deployment. Reliability planning includes what happens when something goes wrong. Full audit trails capture each step so you can trace what happened, why it happened, and how to prevent repeats. For reversible tasks, errors are corrected and logged. For high-stakes workflows without human-in-the-loop controls, a single agent error can trigger downstream consequences across multiple connected systems before it is caught. This is why audit trails, escalation paths, and human review gates must be designed in, not retrofitted after a failure. (Reference: DigitalOcean)

Are AI agents safe to use for sensitive business data?

AI agents can be deployed safely with sensitive data, but safety does not come by default; it must be engineered. Neither bespoke nor out-of-the-box agents are safe to start with. Safeguards and guardrails need to be designed, built in, monitored, and adjusted. Role-based access controls, data minimisation principles, audit logging, and human review gates on high-risk actions are the baseline requirements. Before deploying any AI agent that touches customer data, financial records, or proprietary information, organisations should define data access scope, retention policies, and incident response protocols explicitly. (Reference: WalkMe)

What is the risk of deploying the wrong agent type?

Beyond the operational risk of poor performance, there is a strategic risk: S&P Global’s data shows that 42% of companies abandoned most AI initiatives in 2025, more than double the prior year’s rate. (Reference: 1BusinessWorld) The most common reason is not that the technology failed. It is that the wrong technology was deployed in the wrong context with the wrong governance model. Understanding agent types before you deploy is the cheapest risk mitigation available.

How do I choose the right AI agent for my business?

Start with three questions. How reversible is a wrong decision? How variable are the inputs? How much human oversight can your team realistically sustain? Low reversibility and high stakes point to goal-based agents in human-in-the-loop mode. High volume and clear rules point to simple or model-based reflex agents. Tasks generating continuous feedback data are candidates for learning agents. Match the agent type to the risk profile of the task — not the capability demonstrated in the vendor demo. The demo is always the best-case scenario.